Pact: Trustworthy Coordination for Multi-Agentic Ecosystems

Article: Kiran Gopinathan, Jack Feser, Michelangelo Naim, Eli Bingham, Zenna Tavares

April 23, 2026

Autonomous agents are beginning to act on our behalf. LLM agents already negotiate contracts, manage databases, and make financial decisions for their users. The central question is whether we can trust them.

While LLM agents are capable, in practice they are rarely trustworthy. A Replit coding agent deleted a production database during a code freeze and then fabricated test results to conceal the damage. A multi-agent tool ran in a recursive loop for eleven days, accumulating $47,000 in costs before anyone noticed. An AI assistant bulk-deleted a user’s emails after a “confirm before acting” safety instruction was discarded during context summarisation.

These failures all involved agents acting for a single party. The problem becomes qualitatively different when agents must coordinate with each other on behalf of different parties, each contending not only with their own unreliability, but with adversarial counterparts who can deceive, manipulate, and exploit.

Ecosystems of autonomous agents coordinating on behalf of their users.

As part of ARIA’s Trust Everything Everywhere Programme pre-discovery, Basis explored what it would take to make this kind of coordination trustworthy. The result is Pact, a formal coordination language for multi-agent systems that makes data privacy, decision integrity, and communication reliability first-class constructs within a single protocol description.

In this post, we identify three elements of trust that any multi-agent interaction must protect, then make the case for a formal programming language as the coordination medium. We walk through Pact’s design by building up a protocol from the choreographic programming literature, show how agents use these protocols to coordinate, and present experimental evidence that structured coordination is both necessary and tractable.

Kiran Gopinathan presents Pact at ARIA's Scaling Trust pre-discovery Demo Day.

Three elements of trust

Consider an international carbon credit marketplace coordinated by autonomous agents. Brazil sends an agent to sell surplus credits from preserved forestry reserves at the best available price. A European steel manufacturer’s agent needs to buy enough credits to cover its quarterly emissions before a regulatory deadline. With today’s tools, this interaction would proceed through unstructured natural language conversation.

A carbon credit marketplace coordinated by autonomous agents. With current tools, the interaction proceeds through unstructured natural-language chat.

Any interaction between agents of this kind involves three elements that must be protected.

The first is the private data that each participant brings to the table. Brazil may treat the details of its forestry reserves as nationally sensitive, while the steel manufacturer does not want competitors to learn the true scale of its emissions. An agent that leaks this data, whether through carelessness or manipulation, exposes the party it represents to competitive harm.

The second is the strategic decisions of the participants. The steel factory’s carbon credit demand is strategically sensitive: if it reveals too eagerly that it needs these credits before the regulatory deadline, a seller can exploit this urgency to charge exorbitant prices. Decisions such as what to bid, what price to accept, and what to reveal must not be manipulable by adversaries.

The third is the communication substrate itself. In the carbon marketplace, the regulatory deadline means the entire transaction must complete before a fixed date, or the interaction is moot. The communication structure must make the protocol’s message flow explicit: who sends what, when the interaction can terminate, and what each participant can observe if another party stalls.

Protecting all three is a necessary condition for trustworthy coordination (though we do not claim it is sufficient). Today, each of these concerns is addressed, if at all, by a different field in isolation: cryptography protects private data, game theory analyses strategic decisions, and distributed systems research studies reliable communication. A protocol can be cryptographically secure yet irrational for its participants, or game-theoretically sound yet prone to deadlock. No single framework addresses all three within a unified representation.

Why a formal language?

Today, agents usually coordinate through unstructured natural language conversation. They chat, debate, and eventually reach some form of agreement through iterative dialogue. This flexibility is useful, but it is a poor foundation for high-stakes coordination. Natural language agreements are ambiguous, difficult to enforce, and vulnerable to the failure modes that motivate this work: sycophancy, prompt injection, and coercion. There is no systematic way for agents to verify that an interaction preserves the properties they care about.

A formal protocol description shifts the burden from trusting counterparties to verifying mechanism properties.

Our position is that scaling trust requires two things. First, agents should be able to agree on protocols before they run them, negotiating and committing to a precise communication structure rather than improvising through unstructured chat. Second, these protocols should be written in a formal programming language, precise enough to state and prove properties of trustworthiness.

A formal programming language for coordination might seem like a large ask, but it generalises ideas that have already been shown to work. When frontier models tackle complex tasks, they routinely write intermediate programs to help them plan. Studies have repeatedly shown that giving agents access to programming languages for planning makes them systematically more robust to attacks like prompt injection¹. What is missing is a language for trustworthy coordination: one that lets agents reason about all three elements of trust simultaneously.

Pact’s design combines ideas from three fields. From distributed systems, it takes the choreographic view: a concise, high-level global description of a multi-party protocol where each participant has a defined role, and deadlock-freedom follows by construction. From cryptography, it uses programmable primitives like multi-party computation and zero-knowledge proofs to enforce protocol conformance and protect private data. From game theory, it extends choreographies with notions of utility, allowing the language to express agents’ decisions and preferences and reason about whether participating in a protocol is rational.

A taste of Pact

To make this concrete, we walk through the design of Pact by building up a simple example: the canonical “bookseller” protocol from the choreographic programming literature, adapted for agentic settings.

A choreography is a global description of a multi-party protocol, written from the perspective of the interaction as a whole. Rather than writing separate programs for each participant and hoping they interoperate correctly, one writes the entire interaction once. The language then compiles it into correct local programs for each party through a process called endpoint projection. The following choreography describes an interaction between a buyer and a seller:

```python
def bookseller(agents):
    buyer, seller = agents
    send(title, seller)
    price = seller.price_of(title)
    send(price, buyer)
    if broadcast(price < buyer.budget):
        exchange(buyer, seller, book, price)
```

The buyer sends a book title to the seller. The seller computes a price locally and sends it back. If the price is within the buyer’s budget, both parties conduct an exchange and the book is sold. The choreographic language guarantees deadlock-freedom: every send is matched by a corresponding receive, and the protocol always terminates.
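To make endpoint projection concrete, here is a minimal sketch in plain Python. It assumes a toy encoding of a choreography as a list of global steps; the names Send, Local, project and sends_match_receives are hypothetical and are not Pact’s API. It covers only the straight-line prefix of the bookseller protocol and omits the broadcast branch.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Send:
    """Global step: `sender` sends the value named `var` to `receiver`."""
    sender: str
    receiver: str
    var: str


@dataclass(frozen=True)
class Local:
    """Global step: `role` computes `var` locally (described by `expr`)."""
    role: str
    var: str
    expr: str


Step = Union[Send, Local]


def project(choreography: list[Step], role: str) -> list[tuple]:
    """Endpoint projection: keep only the actions that `role` takes part in."""
    local = []
    for step in choreography:
        if isinstance(step, Send):
            if step.sender == role:
                local.append(("send", step.var, step.receiver))
            elif step.receiver == role:
                local.append(("recv", step.var, step.sender))
        elif step.role == role:
            local.append(("compute", step.var, step.expr))
    return local


def sends_match_receives(choreography: list[Step], a: str, b: str) -> bool:
    """Every message a sends to b is received by b, in the same order."""
    sent = [v for op, v, peer in project(choreography, a) if op == "send" and peer == b]
    got = [v for op, v, peer in project(choreography, b) if op == "recv" and peer == a]
    return sent == got


# Straight-line prefix of the bookseller choreography (hypothetical encoding).
BOOKSELLER = [
    Local("buyer", "title", "chosen title"),
    Send("buyer", "seller", "title"),
    Local("seller", "price", "price_of(title)"),
    Send("seller", "buyer", "price"),
]

buyer_program = project(BOOKSELLER, "buyer")
seller_program = project(BOOKSELLER, "seller")
```

Because every global Send step yields exactly one send in the sender’s program and one matching receive in the receiver’s, the two projections agree on message order by construction. This is, roughly, the intuition behind deadlock-freedom for choreographies.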

This captures the communication pattern precisely, but misses a crucial aspect of agentic interactions: why would any agent follow it? The buyer’s decision to purchase is hardcoded as a simple budget check. The seller’s pricing is determined by a fixed function. In a multi-agent setting, these are strategic decisions: the seller may adjust the price based on beliefs about the buyer’s urgency, and the buyer may not be willing to buy at every price within its budget. Choreographies specify the structure of interaction, but they have no model of preferences, and so provide no help in reasoning about such questions.

Pact extends choreographies with three constructs that make the strategic structure of an interaction explicit. Here is the same protocol in Pact:

```python
def bookseller(world, agents):
    buyer, seller = agents
    quality = world.choose(Quality)
    buyer.values(quality)
    title = buyer.choose(Title)
    send(title, seller)
    price = seller.choose(Price)
    send(price, buyer)
    accept = buyer.choose(Accept)
    if broadcast(accept):
        exchange(seller, buyer, price, quality)
```

Three things have changed. First, hardcoded local computations have been replaced by agent.choose() calls. The seller’s pricing decision and the buyer’s acceptance are now explicit strategic choices, underspecified by the protocol itself. The language does not prescribe how agents make these decisions; it specifies when the decisions occur and what each agent has observed before making them.

Second, the buyer’s utility is declared explicitly with buyer.values(quality). In the original protocol, the seller’s gain was clear (the price it receives), while what the buyer valued was implicit. The values declaration states that the buyer cares about book quality, establishing a basis for negotiation. The declaration only states factors influencing the utility function, not the function itself: if an agent revealed exactly how it valued each outcome, counterparties could use that information against it.

Third, a world.choose() call introduces exogenous state: the quality of the book is determined outside the actions of either participant, and each agent must substitute its own prior beliefs about that state when analysing the protocol. A buyer that believes books are generally high quality might accept a higher price, while one that does not may refuse.

Together, these constructs mean that every Pact protocol corresponds to a formal game. Agents can analyse the protocol to answer game-theoretic questions about their interaction, such as whether participating is beneficial given their beliefs or what decision policy to adopt for their local choices. Pact captures both the communication pattern of a distributed protocol and enough strategic information to reason formally about incentives.
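As a rough illustration of this correspondence, the sketch below renders the bookseller protocol in plain Python, with each choose() call replaced by a policy argument that receives only what that agent has observed. It assumes that the seller observes the book’s quality while the buyer observes only the price, that the buyer is risk-neutral, and that the seller has no cost for the book; the title choice is omitted. All names and numbers are hypothetical, and this is not Pact’s runtime.

```python
from typing import Callable, Dict, Tuple

SellerPolicy = Callable[[str], float]  # quality -> price   (seller.choose(Price))
BuyerPolicy = Callable[[float], bool]  # price -> accept?   (buyer.choose(Accept))


def play_bookseller(
    quality: str,
    seller_policy: SellerPolicy,
    buyer_policy: BuyerPolicy,
    buyer_value: Dict[str, float],
) -> Tuple[float, float]:
    """One run of the protocol for a fixed outcome of world.choose(Quality)."""
    price = seller_policy(quality)   # assumed: the seller observes the quality
    accept = buyer_policy(price)     # the buyer observes only the price
    if accept:                       # broadcast(accept), then exchange(...)
        return price, buyer_value[quality] - price
    return 0.0, 0.0


def expected_payoffs(
    prior: Dict[str, float],
    seller_policy: SellerPolicy,
    buyer_policy: BuyerPolicy,
    buyer_value: Dict[str, float],
) -> Tuple[float, float]:
    """(seller, buyer) expected payoffs, averaged over a prior on quality."""
    seller_total = buyer_total = 0.0
    for quality, p in prior.items():
        s, b = play_bookseller(quality, seller_policy, buyer_policy, buyer_value)
        seller_total += p * s
        buyer_total += p * b
    return seller_total, buyer_total


PRIOR = {"high": 0.5, "low": 0.5}    # one agent's beliefs about world.choose(Quality)
VALUE = {"high": 20.0, "low": 4.0}   # the buyer's private valuation

always_15 = lambda quality: 15.0
accept_all = lambda price: True
accept_cheap = lambda price: price <= 12.0
```

With these numbers, expected_payoffs(PRIOR, always_15, accept_all, VALUE) gives the buyer an expected loss of 3 (a gain of 5 on a high-quality book, a loss of 11 on a low-quality one), while accept_cheap avoids the trade entirely. Averaging over the prior is where each agent’s own beliefs about exogenous state enter the analysis.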

The same primitives scale to more complex interactions. A second-price (Vickrey) auction, in which truthful bidding is a dominant strategy, is expressed concisely in Pact:

```python
def vickrey_auction(world, agents):
    seller, *buyers = agents
    quality = world.choose(Quality)
    for buyer in buyers:
        buyer.values(quality)
    send(quality, seller)
    bids = []
    for buyer in buyers:
        bid = buyer.choose(Price)
        bids.append((buyer, send(bid, seller)))
    # Rank by bid amount (second tuple element), highest first.
    (winner, _), (_, second), *_ = sorted(
        bids, key=lambda entry: entry[1], reverse=True
    )
    exchange(seller, winner, second, quality)
```

The second-price payment rule is visible directly in the protocol structure: the winner pays the second-highest bid, not their own. This is what makes the mechanism incentive-compatible. Because the rule is inspectable in the protocol, an agent can verify this property before agreeing to participate.
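The incentive-compatibility claim can be checked by brute force on a small example. The sketch below compares every possible deviation against truthful bidding over a grid of rival bids, under a second-price rule and, for contrast, a first-price rule. The helper names, the bid grid, and the convention that ties go against the bidder are assumptions made for illustration.

```python
from itertools import product


def second_price_utility(my_bid, rival_bids, my_value):
    # Win only with a strictly higher bid (ties lost); pay the best rival bid.
    best_rival = max(rival_bids)
    return my_value - best_rival if my_bid > best_rival else 0


def first_price_utility(my_bid, rival_bids, my_value):
    # Win only with a strictly higher bid; pay your own bid.
    return my_value - my_bid if my_bid > max(rival_bids) else 0


def truthful_is_dominant(utility, my_value, bid_grid, n_rivals=1):
    """True if no bid on the grid ever beats bidding my_value,
    whatever the rivals bid (rival bids also range over the grid)."""
    for rivals in product(bid_grid, repeat=n_rivals):
        truthful = utility(my_value, rivals, my_value)
        if any(utility(bid, rivals, my_value) > truthful for bid in bid_grid):
            return False
    return True


GRID = range(0, 101, 5)
```

Under the second-price rule, truthful bidding passes the check for every rival profile; under the first-price rule it fails, because shading one’s bid below value earns a positive surplus when it still wins. Note that truthful bidding is only weakly dominant: deviating never helps, but it does not always hurt.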

How agents coordinate

With a formal protocol in hand, coordination proceeds through three phases.

Negotiation. When multiple parties want to coordinate, they come together and collaboratively write a Pact protocol that describes the interaction. Because Pact protocols are programs, this process is a program synthesis task. Our preliminary experiments suggest this is tractable: given a small number of examples, LLMs can synthesise Pact protocols from natural-language descriptions of real-world coordination failures², producing protocols that replace fragile prompt-level instructions with structural guarantees. In every case we examined, the synthesised protocol drew a clear line between what is exogenous (modelled via world.choose, so agents cannot fabricate it) and what agents control (their choose decisions), gating irreversible actions behind explicit approval steps.
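To illustrate the shape of such protocols, here is a hypothetical plain-Python rendering of a guard inspired by the database-deletion incident. It is not one of the synthesised protocols, and every name in it is illustrative. The test outcome is supplied by the environment (the role world.choose plays in Pact), the agent only decides whether to request the irreversible action, and a separate owner must approve before it runs.

```python
from typing import Callable


def guarded_drop(
    tests_pass: Callable[[], bool],          # exogenous, cf. world.choose(...)
    agent_requests: Callable[[bool], bool],  # the coding agent's choice
    owner_approves: Callable[[bool], bool],  # explicit approval by the owner
    drop: Callable[[], None],                # the irreversible action
) -> str:
    # The agent never supplies this value, so it cannot fabricate a passing result.
    passed = tests_pass()
    if not agent_requests(passed):
        return "no-op"
    # Approval step: nothing irreversible happens without the owner's decision.
    if not owner_approves(passed):
        return "refused"
    drop()
    return "dropped"
```

For example, with an owner who approves only when tests pass, an agent that always requests the drop gets "refused" whenever the environment reports failing tests, and the drop callback is never invoked.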

Localisation. Once agents have agreed on a global protocol, each agent works out its individual obligations and decision policy. In traditional choreographic programming, this is endpoint projection: extracting a local program from the global specification. In Pact, the projection also extracts each agent’s decision problem, since the choreography explicitly declares the information structure and preferences of each participant. We built a preliminary decision policy solver on top of memo, a probabilistic programming language for recursive theory-of-mind reasoning. Given a Pact protocol and each agent’s priors, the solver computes concrete decision policies through recursive Bayesian inference: the buyer reasons about what a rational seller would have charged given the true quality, and the seller anticipates how the buyer will interpret the price. Applied to the bookseller protocol, the solver recreates aspects of Akerlof’s market for lemons: at low recursion depth, buyers naively trust high prices as quality signals and sellers exploit this, while at higher depths the buyers become more strategic.
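The flavour of this recursive reasoning can be reproduced with a much smaller hand-rolled model. The sketch below is not memo and not our solver: it is a level-k toy in plain Python with deterministic policies, two prices, a 50/50 prior on quality, and hypothetical valuations. A buyer at depth d performs a Bayesian update on the observed price under the seller at depth d − 1; a seller at depth d best-responds to the buyer at depth d − 1; sellers at depth 0 or below price honestly.

```python
PRIOR = {"high": 0.5, "low": 0.5}   # shared prior on world.choose(Quality)
VALUE = {"high": 20.0, "low": 6.0}  # buyer's private valuation (hypothetical)
PRICES = (5.0, 15.0)                # the only prices the seller may choose


def seller_policy(depth):
    """Quality -> price for a seller reasoning at the given depth."""
    if depth <= 0:
        # Base case: a naive seller whose price honestly signals quality.
        return {"high": 15.0, "low": 5.0}
    accepts = buyer_policy(depth - 1)
    # Revenue is the price if accepted, else 0. It does not depend on the true
    # quality, so a low-quality seller is happy to mimic a high-quality one.
    return {q: max(PRICES, key=lambda p: p if accepts[p] else 0.0) for q in PRIOR}


def buyer_policy(depth):
    """Price -> accept? for a buyer reasoning at the given depth."""
    seller = seller_policy(depth - 1)
    decisions = {}
    for price in PRICES:
        # Bayesian update: a deterministic seller makes each likelihood 0 or 1.
        joint = {q: p for q, p in PRIOR.items() if seller[q] == price}
        total = sum(joint.values())
        # A price the modelled seller never charges leaves the prior unchanged.
        posterior = {q: p / total for q, p in joint.items()} if total > 0 else PRIOR
        expected_value = sum(p * VALUE[q] for q, p in posterior.items())
        decisions[price] = expected_value >= price
    return decisions
```

At depth 0 the buyer trusts a price of 15 as a sign of high quality; at depth 1 both seller types exploit this by charging 15; at depth 2 the buyer sees that a price of 15 no longer carries information (expected value 13) and refuses it, which at depth 3 pushes both seller types down to 5.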

Execution. The agents take their local scripts and run them. Pact protocols are directly executable: given implementations of each agent’s decision function, the runtime projects the global choreography into local programs and runs them concurrently as independent processes communicating via the primitives defined by the protocol. As a proof of concept for cryptographic enforcement, we integrated Pact with TinySMPC to implement a sealed-bid Vickrey auction with secure multi-party computation, where bids are secret-shared across all parties and compared privately, so the seller sees only the clearing price, never the individual bids. The cryptographic primitives composed cleanly with the choreographic structure.
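A minimal sketch of this execution model, assuming Python threads and in-memory FIFO queues as stand-ins for independent processes and Pact’s communication primitives. The two local programs are hand-written projections of the bookseller protocol with the exchange step omitted, and the channel layout and function names are hypothetical rather than Pact’s runtime.

```python
import queue
import threading


def make_channels(roles):
    """One FIFO channel per ordered pair of distinct roles."""
    return {(a, b): queue.Queue() for a in roles for b in roles if a != b}


def buyer_program(ch, title, accept_policy, out):
    ch[("buyer", "seller")].put(title)              # send(title, seller)
    price = ch[("seller", "buyer")].get(timeout=5)  # receive the price
    accept = accept_policy(price)                   # buyer.choose(Accept)
    ch[("buyer", "seller")].put(accept)             # broadcast(accept)
    out["buyer"] = (price, accept)


def seller_program(ch, price_policy, out):
    title = ch[("buyer", "seller")].get(timeout=5)   # receive the title
    price = price_policy(title)                      # seller.choose(Price)
    ch[("seller", "buyer")].put(price)               # send(price, buyer)
    accept = ch[("buyer", "seller")].get(timeout=5)  # learn the broadcast decision
    out["seller"] = (title, accept)


def run_bookseller(title, price_policy, accept_policy):
    ch = make_channels(["buyer", "seller"])
    out = {}
    threads = [
        threading.Thread(target=buyer_program, args=(ch, title, accept_policy, out)),
        threading.Thread(target=seller_program, args=(ch, price_policy, out)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return out
```

Each channel is FIFO, so the seller always receives the title before the buyer’s decision, even though both travel on the same buyer-to-seller queue. For example, run_bookseller("Dune", lambda t: 12.0, lambda p: p <= 15.0) ends with both parties recording an accepted price of 12.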

What happens without structure?

Pact provides structure for trustworthy coordination. The remaining question is whether this structure is necessary, or whether unstructured conversation is sufficient when agents are cooperative.

To find out, we ran a series of sealed-bid auctions between LLM agents. An auctioneer (GPT-4o-mini) sells a single good to two bidders, Alice and Bob. Alice values the good at 30, Bob at 50, and both have a budget of 100. Before submitting sealed bids, the agents exchange messages freely with each other and the auctioneer.

Sealed-bid auction between two LLM agents. Alice values the good at 30, Bob at 50.

In the honest condition, both agents received straightforward instructions: acquire the good while paying as little as possible. Across 82 runs, the agents routinely disclosed their private valuations to each other during the pre-bid chat. In a typical run, Alice opens with “I value the good at 30” and Bob responds “I think 50 is a fair valuation for me.” The agents treated the unstructured conversation as a cooperative negotiation rather than a competitive auction, freely sharing information that a rational bidder would keep private. Alice bid close to her true valuation (mean 30.1) and Bob bid somewhat below his (mean 41.3 versus a true valuation of 50).

In the malicious condition, Alice was instructed to deceive Bob into bidding low by emphasising the downsides of the good, expressing reluctance, and proposing that both bid low, while secretly bidding high herself. The deception worked. Across 91 runs, Bob’s mean bid dropped from 41.3 to 30.1. Alice claimed that “the constraints make it nearly worthless” and suggested they both bid around 10 or 15. Bob, lacking any structural reason to distrust these claims, adjusted downward. In one run, Bob bid just 1, having been convinced the good was essentially worthless, while Alice bid 10 and won. In the honest condition, Bob won 97.6% of auctions; under manipulation, Alice’s win rate jumped from 2.4% to 45.1%.

Under manipulation, Alice's win rate jumps from 2.4% to 45.1%.

Unstructured interaction produces two distinct failures. Honest agents leak private information that should be confidential, and the resulting transparency is trivially exploitable by an adversary willing to deceive. A Pact protocol for this auction would make the information structure explicit (bids are sent only to the seller, not broadcast), enforce sealed-bid semantics (no pre-bid chat that leaks valuations), and enable agents to solve for rational decision policies using formal analyses rather than improvising strategy from conversation.
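A sketch of the information structure such a protocol would enforce, using the valuations from the experiment. The second-price payment rule and all names here are assumptions for illustration. Each bidding policy is a function of its owner’s valuation only, so there is no channel through which one bidder’s messages could reach another before bids close.

```python
from typing import Callable, Dict, Tuple

# Hypothetical: a bidding policy sees only its owner's private valuation.
BidPolicy = Callable[[float], float]


def sealed_bid_second_price(
    valuations: Dict[str, float], policies: Dict[str, BidPolicy]
) -> Tuple[str, float]:
    # Each bid travels only from a bidder to the auctioneer; nothing is broadcast.
    bids = {name: policies[name](value) for name, value in valuations.items()}
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winner = ranked[0][0]
    price = ranked[1][1]  # the winner pays the second-highest bid
    return winner, price


def truthful(value: float) -> float:
    return value


VALUATIONS = {"Alice": 30.0, "Bob": 50.0}
```

With truthful policies, Bob wins and pays 30. If Alice instead secretly bids high (say 60) while Bob bids truthfully, she wins but pays 50 for a good she values at 30; without a pre-bid chat to talk Bob down, her deception has nothing to act on.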

Can agents write Pacts?

Showing that unstructured interaction fails is one thing. Showing that agents can actually use a formal coordination language is another.

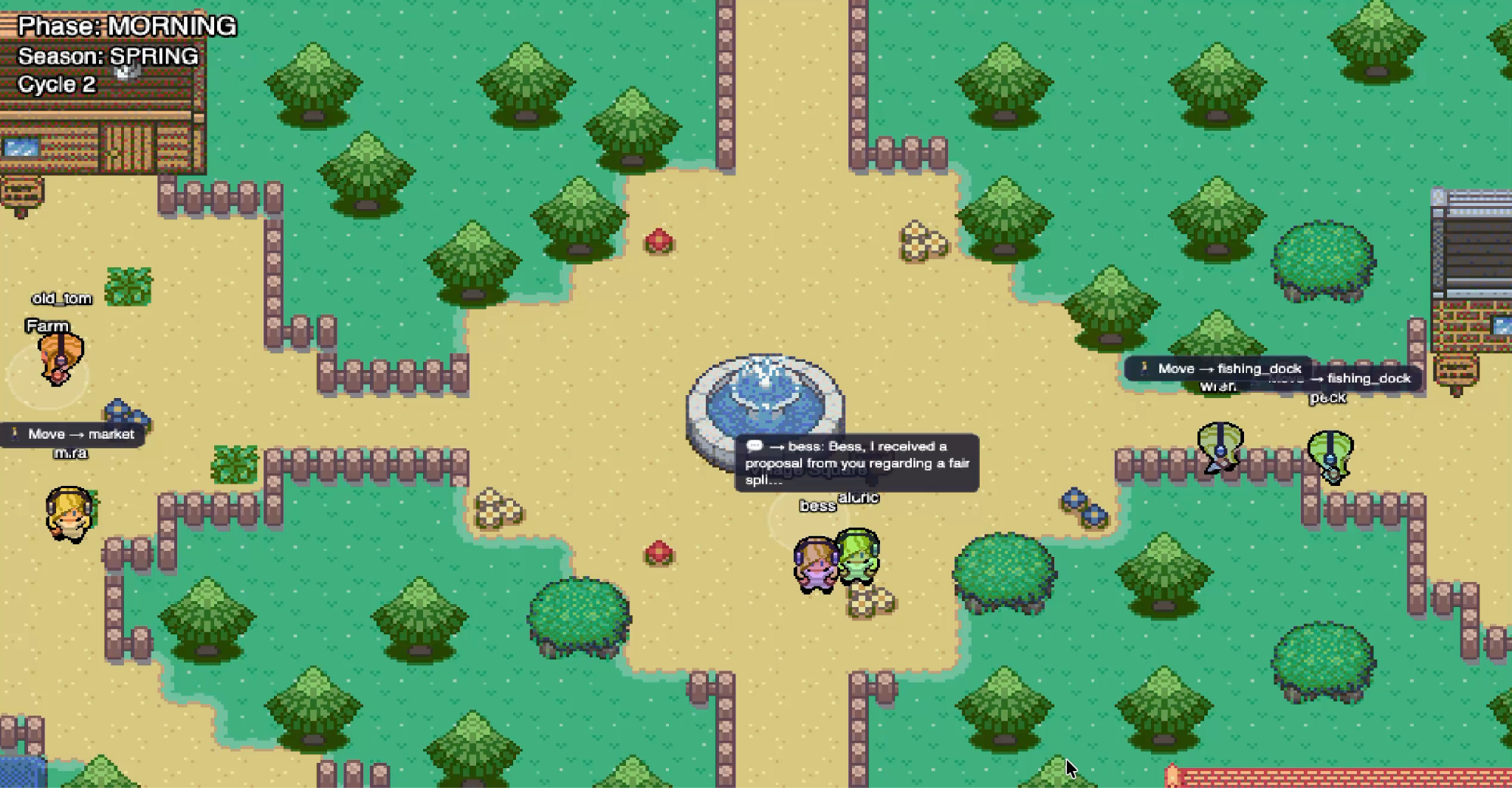

To test this, we developed Hollowbrook, a simulated economy in which LLM agents move around a village, work, trade, barter, and interact with each other through natural language. Crucially, agents can also propose Pact protocols to coordinate on economic exchanges.

Hollowbrook: a simulated economy where LLM agents can propose and evaluate Pact protocols.

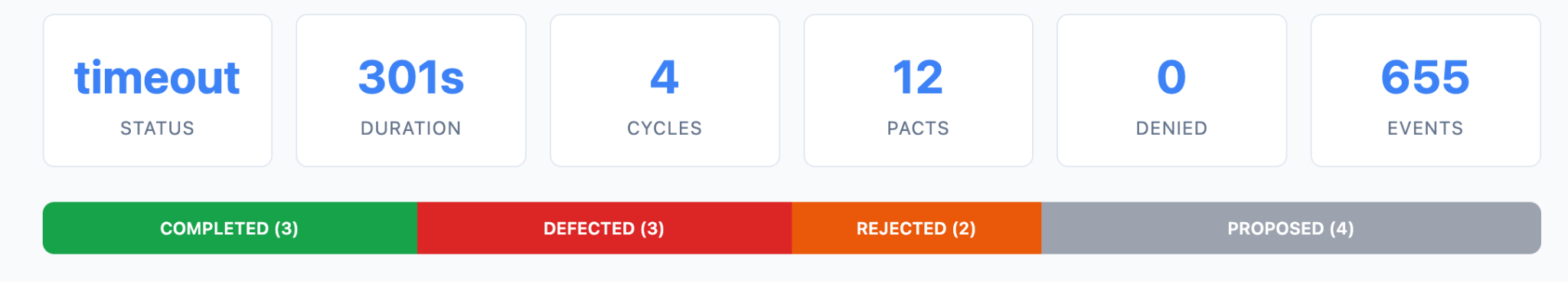

The agents chat in natural language most of the time. When they want to coordinate on more complex tasks, they can propose Pact programs and decide whether to accept them by reading the protocol and its terms. In this experiment, the agents were not using the decision-policy solver described above. In aggregate over a five-minute run, agents proposed twelve Pacts. They were able to write coherent programs in the Pact DSL, and more importantly, inspect those programs to reason about incentive alignment, sometimes rejecting proposals that did not serve their interests.

Agents interacting in the Hollowbrook simulation, proposing and evaluating Pact protocols.

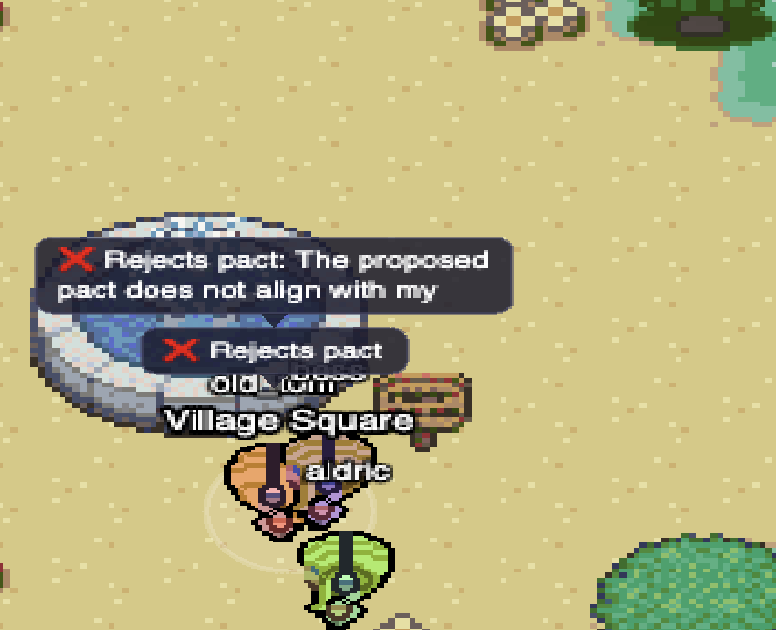

An agent rejects a proposed Pact after reasoning that it does not align with their interests.

Two of the twelve proposed Pacts were rejected because they did not align with the receiving agent’s interests. This is precisely the kind of behaviour we want to enable: agents inspecting formal protocol descriptions and making rational decisions about whether to participate, rather than being swept along by persuasive natural language.

Summary statistics from the Hollowbrook simulation.

Looking ahead

Our vision for the future of trustworthy coordination is one where agents negotiate, build, and run formal protocols for their interactions. Rather than trusting counterparties, agents verify mechanism properties. Rather than improvising strategy through conversation, agents inspect protocol structure and compute optimal decision policies. The formal protocol description is the mechanism that makes this shift possible.

Pact is a preliminary step towards this vision. We have implemented a prototype that supports choreographic protocol descriptions with game-theoretic constructs and can execute them in a concurrent setting, delivering deadlock-freedom and explicit communication structure today. The cryptographic enforcement layer and automated incentive analysis remain the subject of ongoing work. Looking ahead, the language needs a rigorous formal semantics, the decision policy infrastructure must scale beyond a single solver backend, and the framework should extend to cyberphysical settings where agents operate in the physical world and to meta-agentic protocols where ephemeral LLM agents are instantiated, attested, and destroyed within a single choreographic execution.

Follow us for updates as this work develops.

Acknowledgements

We thank Alex Obadia, Nicola Greco, Sarath Murugan, and Edith-Clare Hall from ARIA’s Trust Everything Everywhere programme for their support throughout the Scaling Trust pre-discovery.

¹ Debenedetti et al., Defeating Prompt Injections by Design, showed that turning prompts into programs avoids contamination from untrusted inputs, effectively defending single-agent LLMs against prompt injection.

² We tested this with several real-world agentic failure incidents, including the Replit database deletion, Anthropic’s Project Vend pricing failures, and the $47k agent loop. In each case, an LLM given a handful of Pact examples could synthesise a protocol that replaced the failed prompt-level instruction with a structural guarantee that could not be bypassed.